Greetings all —

Hope everyone’s as well as can be out there, after The Election. Personally, I spent much of last week sleep-deprived and fried, having come off a week-long flu (during which I streamed a deluge of horror movies) right into the election week chaos. And then I immediately got another nasty cold—the joys of having small kids in school—so I remain uncertain as to what is actually real, and what is a feverish, semi-hallucinated daydream, and I’m just going to revel in that for a while.

I’m working on a longer post about what the coming Trump years might hold for the tech world—the vast majority of it concerning, from an anticipated rise in surveillance tools required to enact mass deportation, to the further entrenchment of tech monopolies, to Elon Musk’s incipient reign as the nation’s most powerful government tech contractor, to a frenzied boom in crypto and defense tech that has already begun—but in the meantime, I want to touch on a story that’s cause for a bit of hope, in spite of everything.

I’m talking, like so many other tech pundits, about the ascent of Bluesky. The decentralized social network hit 15 million users last week, and claimed the no. 1 spot on the App Store, above ChatGPT and TikTok. At time of writing, it still held that spot, all thanks to users fleeing Musk’s X-né-Twitter, the introduction of some good and innovative features, and the refreshing sense that someone is actually successfully building a place on the internet with users, not consumers, in mind. In 2024, that is enough to qualify it as a nearly utopian project.

So many people were signing up for Bluesky every day last week that its servers were overloaded for intermittent stretches.

Bluesky has spent the last two years or so hovering on the verge of a breakthrough, primarily because it’s the go-to home for Twitter and X refugees. Musk bought Twitter in 2022; when he started re-platforming users previously banned for hate speech and harassment, boosting his own feed, introducing pay-to-play features, and so on, many fled the platform. Bluesky, which was co-founded by Jack Dorsey, had its first big moment in spring 2023, when a group of power users set up shop. I wrote about that moment for the Times; Bluesky felt freewheeling, weird, and open to low-stakes posting in a way social media hadn’t in years.

Since then, it’s grown in spurts and starts, attracted dedicated users, and enjoyed boom periods whenever Musk does something divisive, like taking X offline in Brazil rather than meet with regulators, or removing its blocking feature. But what happened this week is on a different level. X was already a toxic cesspool, brimming with misogyny, spam porn accounts, and door-to-door AI salesmen—but the fact that Musk helped elect Trump became too much for many users to bear. Users are defecting, closing their accounts, and heading to Threads, Mastodon, or Bluesky.

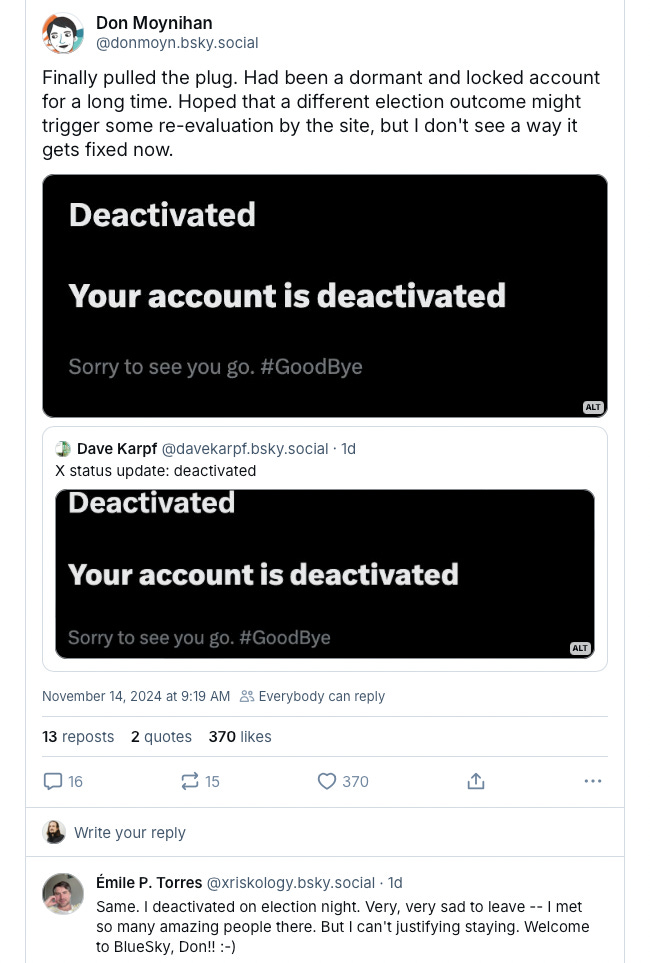

It’s become a badge of honor on Bluesky to post your X account deactivation screenshots, and I’ve lost hundreds, many thousands, of followers since the exodus began. And I’m happy for them! X is a mostly miserable place. But it’s also, as Malcolm Harris once remarked to me over coffee, where the fight is. And that’s never been more true; if you are a semi-unhinged person (like me) who uses the platform to comment, criticize or otherwise yell about the parade of injustices handed down by tech companies and billionaires, it’s still probably the best place to do that; it’s where your commentary on such matters is most likely to be seen where it might matter. (Whether or not posting has any such utility at all is a question for another day; I have been the butt of much scoffing and many an argument because I actually think it does, to a point.)

But for all other modes of posting—jokes, historical anecdotes, community-building, general news-sharing—Bluesky is better. Much better. And that’s where I hope to be spending most of my time going forward. And there are a few things fueling the boom this go-round, besides the fact that it’s a Twitter clone that is not owned by Elon Musk.

Mostly, the starter packs. Bluesky lets users curate easily accessible and shareable lists of recommended users for those just joining the platform. This is a ‘why don’t we put wheels on luggage’ sort of innovation; in hindsight, it’s wild that no other social network had thought of it before. It serves two purposes: It gives new users a quick onramp onto the platform, and it gives users included in the packs new audiences; everyone wins. The biggest challenge any social media site faces is successfully activating the network effect—sites like Bluesky are only as good as the user bases they can attract, and sustain.

And thus the question of the week was: Does Bluesky have the juice? Max Read asked precisely that in his newsletter, concluding, essentially, that it has some juice but perhaps not quite enough to become whatever Twitter once was:

Bluesky acts more like a particularly large Discord server--a place to socialize, bullshit, banter, and kill time--than it does like a proper Twitter replacement. For many people I think this is fine; I’m not sure how much the world needs a “Twitter replacement” anyway. But the distinction is still important. Part of what’s made Twitter so attractive to journalists is that it’s relatively easy to convince yourself that it’s a map of the world. Bluesky, smaller and more homogenous, is harder to mistake as a scrolling representation of the national or global psyche--which makes it much healthier for media junkies, but also much less attractive.

Aja Romano and Adam Clark Estes are more sanguine at Vox, noting that it delivers the fun, weird vibes of old Twitter, and “could usher in a brighter future for social media.” Ryan Broderick goes further: “I’m comfortable saying that Bluesky won the title of The Next Twitter... Though, there is a bigger question that hangs over Bluesky. Which is, exactly how big can a text-based, non-algorithmic feed actually get?”

Those constraints are, in part, exactly what makes Bluesky appealing, Jason Koebler notes at 404 Media, in a piece called “The Great Migration to Bluesky Gives Me Hope for the Future of the Internet”:

the energy on Bluesky is exciting, that the app and website are very usable, and that, as a journalist, I appreciate a platform that does not and says it will not punish links in any algorithm and which mostly operates in reverse chronological order… What’s happening on Bluesky right now feels organic and it feels real in a way no other Twitter replacement has felt so far, and it feels better than X.com has been ever since Elon Musk took over.

I have used Bluesky a little bit here and there since logging on a year and a half ago, and while it’s always felt better, it’s also been lacking that element that Read highlights: the feeling of universality, the sense that you really are interacting with a broad spectrum of voices. Now it’s getting closer, even if it still maintains an oversampling of a certain type of user—left-liberal, online hyper-literate, etc.

But even more than its relative success, what’s worth underlining, to me, is that it is succeeding amidst a tech ecosystem that is all-in on data extraction, algorithmically optimized ad tech, and the mass distribution of AI content. This is why so many journalists and techies and posters are cheering so loudly for the platform. (Also because the starter packs helped many of them acquire thousands of followers overnight, thus motivating them to write about the packs; another reason the packs are genius.)

Bluesky is building something for real people; it’s actually listening to what those users want, and tailoring its product and experience accordingly. Wild. And so you get things like a generally real-time, reverse chronological feed, a very customizable user experience, with a wide array of options for deciding how you want your content to be seen and who you want to be able to follow you, and you get responsive content moderation, even though this is surely abetted by the lower volume of posts.

You get promises that Bluesky will not throttle links, like X does, so creators and writers can share their work without fear of an algorithm penalizing them for doing so. That this is a selling point, three decades into the commercial internet—we will not punish you for sharing hyperlinks—is bleak in its own right, but that’s where we are. Bluesky also has a laudable enough stance on AI, stating that it does not train any AI models on posts, and does not intend to.

All this stuff—user customization, creator-friendly policies, and simple-but-smart ideas like the starter packs—amounts to a blast of fresh air to the face of an online world that is dominated utterly by extractive tech monopolies that long ago waved goodbye to any nominal notion of caring about the user. Mark Zuckerberg just said bring on the AI slop. X is an intensely hostile user experience—share a link to your writing, your artwork, a news article, anything, and its algorithm shows the post to fewer people. That’s to say nothing of the nonexistent content moderation and rampant racism. Google is flooding its search result pages with unreliable AI summaries, further diminishing the standing of news and human-written articles.

The online world has become so hostile to users that Bluesky’s pitch of ‘here is a straightforward feed of text-based user-generated posts that we promise not to mess with’ is revelatory. Its scaling model and raison d’être are a rejection of the platforms that have colonized the rest of our digital lives, and relentlessly commodified them. No wonder everyone seems to be rooting for its success, even if there are, pointedly, no guarantees those ideals will remain in place.

Because look, Bluesky is far from perfect. It recently raised a $15 million Series A funding round led by the ominously named Blockchain Capital, though leadership promised it would “never hyperfinancialize the user experience” with NFTs or crypto, and stressed it would remain focused on the user. And I wish it would go as far as Mastodon in its federation, allowing users full interoperability, as Cory Doctorow has called for. (Mastodon is, we should note, by any count, the more properly utopian project; more user control, no weird VC cash, etc. For me, at least, it just sadly doesn’t have the same juice. Most of my network simply isn’t there!)

I wish, as I posted on Bluesky, that it would do even more than that, and take this bright opportunity to expand on what it’s already started—and work to ensure that the user experience that has generated so much excitement won’t erode when Blockchain Capital wants to see some returns on its investment. Why not get wild here, and show how truly committed to the user you are: Maybe introduce a mechanism for users to have a direct say in platform governance matters? A Bluesky User Board? Or instead of looking to monetize via paid features or ads, test voluntary subscription offerings, as Signal and Wikipedia do? Lots of users have already signaled they’d pay a monthly fee to keep Bluesky pristine. Look, a luddite can dream here.

I’m in agreement with Rob Horning and Nathan Jurgenson—the better question than ‘is Bluesky the next Twitter’ is ‘what else might Bluesky become’? What possibilities are latent here? There is excitement over a text-based social media network, in 2024, the age of TikTok and streaming; how might that be channeled?

Bluesky is giving hope to people who spend long hours online precisely because it is purporting to be, and so far succeeding, at least in its very short lifespan, in being everything that big tech is not. No AI spam, no glitchy ad tech, no link throttling, no malignant billionaire owner. Bluesky is tapping into this wellspring of goodwill not just because it promises a return to the halcyon days of *Twitter*, but because it promises a return to the days before ossified, rent-seeking tech monopolies drove our collective online experience to hell.